Posted on October 17, 2013

Disclaimer: Despite the title, I will discuss both RSS and Atom formats. A more accurate title would be “Content Syndication the least wrong way”, but that is just not as snappy.

Generating a static site? Building your own blog engine? Or otherwise need to configure your own content syndication feed? You may have noticed that RSS is a complete shitshow. RSS grew in the messy organic way that much of the web grew, and was standardized too little and too late, like much of the web. People can’t even agree what the acronym RSS stands for. Most activity on the standard itself stopped years ago, and as a result so did discussion of it. Trying to serve your own feed comes with a number of small pitfalls, and searching for advice on the subject yields a slew of contradictory, badly outdated articles. The top hit searching for “rss content type” is 8 years old. That’s 218 in Internet years.

Let me guide you through this mess. Together we will seek simple solutions that work in today’s world. Of course, if you’re not interested in the details, you can skip to the end for my recommended best practices.

Generating the feed

A feed is nothing more than a simple XML file that lists entries. Whenever a new entry is added, the file is updated. Generating this file is outside the scope of this post. Hopefully you are using a tool that will do it for you. If not, you may need to look at some examples or dig into the spec.
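If you do end up rolling your own, here is a rough sketch of what building a minimal Atom 1.0 document might look like with Python's standard library. The function name build_atom_feed, the dictionary keys, and the example identifiers are all made up for illustration; the element names (feed, entry, id, title, updated, author, link) come from the Atom spec. Treat it as a starting point and still run the result through the validator.

```python
import xml.etree.ElementTree as ET

ATOM_NS = "http://www.w3.org/2005/Atom"
ET.register_namespace("", ATOM_NS)  # serialize Atom as the default namespace


def build_atom_feed(feed_id, title, updated, author, entries):
    """Return a minimal Atom 1.0 document as a string.

    `entries` is a list of dicts with "id", "title", "updated" and "url"
    keys (hypothetical names). Timestamps are RFC 3339 strings such as
    "2013-10-17T00:00:00Z".
    """

    def el(parent, tag, text=None, **attrs):
        # Create a namespaced child element with optional text and attributes.
        node = ET.SubElement(parent, f"{{{ATOM_NS}}}{tag}", attrs)
        node.text = text
        return node

    feed = ET.Element(f"{{{ATOM_NS}}}feed")
    el(feed, "id", feed_id)
    el(feed, "title", title)
    el(feed, "updated", updated)
    el(el(feed, "author"), "name", author)

    for item in entries:
        entry = el(feed, "entry")
        el(entry, "id", item["id"])
        el(entry, "title", item["title"])
        el(entry, "updated", item["updated"])
        # An entry without content needs a link back to the post itself.
        el(entry, "link", rel="alternate", href=item["url"])

    return ET.tostring(feed, encoding="unicode")
```

Whenever a new entry is added, you would regenerate the whole string and overwrite the file.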

The important thing is that once you have your feed, you use the official validator to ensure that it is a valid feed format. You should only be generating Atom 1.0 or RSS 2.0. There is no reason to use any older versions of the specs.

Once upon a time, a syndication format arose, and it was called RSS. Then people got annoyed because RSS had some shortcomings, and created a better-thought-out standard called Atom. You will need to make a choice about which format you serve. The good news is it doesn’t matter much. Both are perfectly sufficient for the needs of a simple blog or other standard content stream, and as we will see later, both are widely used and widely supported. No modern feed reader will handle one of these formats but not the other.

If your tool only generates one format or the other, your decision is made for you.

Otherwise, choose one. I recommend Atom. It was built with a spec from the beginning, so the right implementation is also the implementation that works. RSS has existed longer, so in theory some tools could lack Atom support, but in practice this isn’t likely. There are echoes of descriptivism vs. prescriptivism here, and as usual I come down cautiously on the prescriptivist side. Atom is conceptually better, so barring any hurdles to its acceptance, I say we use Atom.

Content type

You must serve your feed with a content type that identifies it as a feed. It’s fairly important to get this right, or at least something approximating right. This ensures that browsers and feed readers recognize it as a feed and behave appropriately.

Atom

Your content type should be application/atom+xml. This is the most correct, and will work well with everything. Using text/xml is technically acceptable but too vague to be a great idea.

RSS

Your content type should be text/xml.

Given what I just said about Atom feeds, it seems like application/rss+xml would be a better idea. However, this is not a registered MIME type, and while it will probably work, you still should not try it. RSS suffered greatly at the hands of those who loved it, and this content-type mess is one of the greatest legacies of that. Other content types used for RSS in the past have included application/xml, text/html, and text/rss+xml, which are all wrong and should be avoided.

You can easily use curl to check that your feed is being served with the correct content type:

curl -I technotes.iangreenleaf.com/feed.xml

Look through the headers in the response for this:

Content-Type: application/atom+xml
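If you would rather script this check, something like the following sketch could work. It encodes the recommendations from this post (not any official rule set) in a small table, and the names ACCEPTABLE, check_content_type, and fetch_content_type are my own inventions. The HEAD request is roughly what curl -I does.

```python
import urllib.request

# Media types per this post's recommendations; the first entry is preferred.
ACCEPTABLE = {
    "atom": ("application/atom+xml", "text/xml"),
    "rss": ("text/xml",),
}


def media_type(header_value):
    """Strip parameters such as '; charset=UTF-8' and normalize case."""
    return header_value.split(";", 1)[0].strip().lower()


def check_content_type(header_value, feed_format):
    """Return 'preferred', 'acceptable' or 'avoid' for a Content-Type value."""
    allowed = ACCEPTABLE[feed_format]
    found = media_type(header_value)
    if found == allowed[0]:
        return "preferred"
    return "acceptable" if found in allowed else "avoid"


def fetch_content_type(url):
    """Issue a HEAD request and return the Content-Type header, if any."""
    request = urllib.request.Request(url, method="HEAD")
    with urllib.request.urlopen(request) as response:
        return response.headers.get("Content-Type", "")
```

You would call check_content_type(fetch_content_type(your_feed_url), "atom") against your own feed URL, which needs to include the scheme (http:// or https://).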

If you are serving your blog from Amazon S3, it will guess (and guess wrong) about the content type it should serve. Give it a hint when uploading the file to prevent this behavior. For example, I use the s3cmd tool and pass it an extra option like so:

s3cmd put --mime-type=application/atom+xml \
  _site/feed.xml s3://technotes.iangreenleaf.com

File names

Functionally, it doesn’t matter at all what you name the files you generate. If they are served up with the correct content type, the file name is irrelevant (though some platforms, like S3, use the filename to guess at the content type if it isn’t set explicitly). I suggest you go with something like feed.xml or rss.xml/atom.xml. This is not incorrect, and should result in roughly-correct behavior from text editors, etc.
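The same filename guessing can bite you when previewing a site locally. As a sketch, assuming you preview with Python's built-in http.server, you could override the guess for known feed files. The FEED_TYPES mapping and the names explicit_type, FeedAwareHandler, and serve are hypothetical; guess_type is the hook that SimpleHTTPRequestHandler uses to pick a content type.

```python
from http.server import HTTPServer, SimpleHTTPRequestHandler

# Hypothetical mapping from feed file names to explicit content types.
FEED_TYPES = {
    "feed.xml": "application/atom+xml",
    "atom.xml": "application/atom+xml",
    "rss.xml": "text/xml",
}


def explicit_type(path):
    """Return the explicit content type for a known feed file, else None."""
    filename = path.replace("\\", "/").rsplit("/", 1)[-1]
    return FEED_TYPES.get(filename)


class FeedAwareHandler(SimpleHTTPRequestHandler):
    def guess_type(self, path):
        # Prefer the explicit mapping; fall back to extension-based guessing.
        return explicit_type(path) or super().guess_type(path)


def serve(port=8000):
    """Serve the current directory (e.g. a generated _site folder) locally."""
    HTTPServer(("127.0.0.1", port), FeedAwareHandler).serve_forever()
```

The point of the design is that the content type is decided by you, not inferred from the extension. Any other file still gets the server's usual guess.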

Auto-discovery

A nifty feature that you definitely want to provide is RSS discovery. Applications, when viewing a page on your site, can automatically find feed links and make them available in some special way. For example, I have an RSS button on my toolbar. If a page I’m on offers a feed, the icon will light up, and clicking on it will open the feed in my preferred feed reader.

No need to search around the page for the link to the feed; my browser has found it for me!

The key to enabling RSS discovery is adding a <link> element inside your <head>. Here is an Atom feed:

<link rel="alternate" type="application/atom+xml"
  href="/feed.xml" title="Atom Feed" />

And an RSS feed:

<link rel="alternate" type="application/rss+xml"
  title="RSS Feed" href="/feed.xml" />

The rel attribute is very important. It must contain alternate and only alternate, or some clients will stumble.

The type attribute is also important. It must be either application/atom+xml or application/rss+xml. “But wait,” you say, “why are we using application/rss+xml when you just told me that’s not a valid content type?” You’re so cute with your questions. But seriously, don’t use it in the content type header, do use it here, stop asking questions and no one will get hurt.

The title attribute must exist, but may contain whatever you like. Just name it something descriptive.

It’s also possible to offer multiple feeds from one page by using more than one <link>:

<link rel="alternate" type="application/atom+xml"
  href="/feed.xml" title="Ian's Blog Feed" />

<link rel="alternate" type="application/atom+xml"
  href="./comments.xml" title='Comments on "This Post"' />

If you do this, users will be shown a selection screen to pick the feed they want.

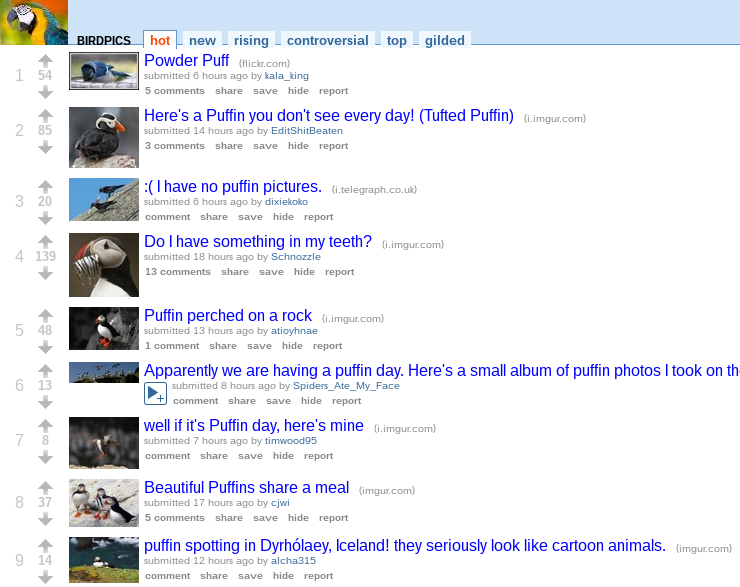

The title attribute is what’s shown here, so make sure you’ve picked good names!

You can even provide links to both an Atom feed and an RSS feed for the same content, and let users choose which format to use. However, I recommend against doing this - more on that later.
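To see roughly what a client does with these tags, here is a sketch of discovery from the consuming side, using Python's standard html.parser. It is my approximation of the rules described above (rel is exactly alternate, type is one of the two feed types), not the logic of any particular browser or reader. The names FeedLinkFinder and find_feeds are made up.

```python
from html.parser import HTMLParser

DISCOVERY_TYPES = {"application/atom+xml", "application/rss+xml"}


class FeedLinkFinder(HTMLParser):
    """Collect <link> elements that advertise a feed."""

    def __init__(self):
        super().__init__()
        self.feeds = []  # list of (title, type, href) tuples

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr = {name: (value or "") for name, value in attrs}
        rel = attr.get("rel", "").lower().split()
        link_type = attr.get("type", "").lower()
        # Strict on purpose: rel must be "alternate" and nothing else.
        if rel == ["alternate"] and link_type in DISCOVERY_TYPES:
            self.feeds.append((attr.get("title", ""), link_type, attr.get("href", "")))


def find_feeds(html):
    """Return every advertised feed in document order."""
    finder = FeedLinkFinder()
    finder.feed(html)
    return finder.feeds
```

Running find_feeds over your own pages is a quick way to confirm that the tags you meant to publish are the ones a client would actually pick up, and that each has a sensible title.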

The great feed survey

Dealing with a poorly-specified area of the web with a spotty history comes with its share of uncertainty. Several of the lessons in this post were learned the hard way after launching this blog and receiving bug reports. Questions of format choices and content types boil down to which combination is going to work correctly for almost everyone, almost all the time. With most of the literature on this subject badly out of date, it’s hard to determine which compatibility issues still occur and which are effectively moot.

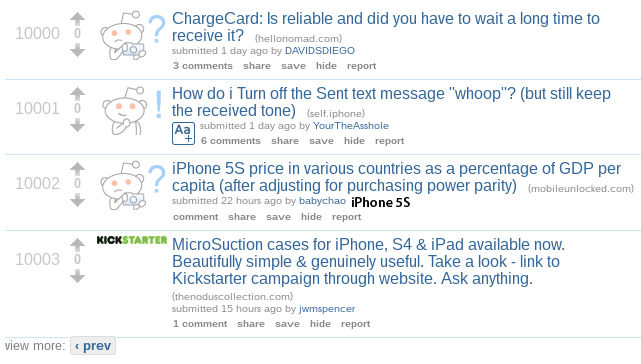

I decided the best way to find out which practices were safe would be to check very popular feeds and see what they did. If something doesn’t work for a significant portion of the web population, I assume these people will have heard about it and taken corrective action. To that end, I conducted an unscientific survey of feeds published by popular blogging platforms and notable citizens of the web. I checked only the feeds made available by autodiscovery.

| Feed | Format | Content type | Autodiscovery tag |
| --- | --- | --- | --- |
| Blogger (Atom) | Atom 1.0 | application/atom+xml; charset=UTF-8 | <link rel="alternate" type="application/atom+xml" title="Official Blog - Atom" href="http://googleblog.blogspot.com/feeds/posts/default" /> |
| Blogger (RSS) | RSS 2.0 | application/rss+xml; charset=UTF-8 | <link rel="alternate" type="application/rss+xml" title="Official Blog - RSS" href="http://googleblog.blogspot.com/feeds/posts/default?alt=rss" /> |
| Tumblr | RSS 2.0 | text/xml; charset=utf-8 | <link rel="alternate" type="application/rss+xml" title="RSS" href="http://staff.tumblr.com/rss"/> |
| Wordpress.com | RSS 2.0 | text/xml; charset=UTF-8 | <link rel="alternate" type="application/rss+xml" title="WordPress.com News" href="http://en.blog.wordpress.com/feed/" /> |
| Feedburner Status | Atom 1.0 | text/xml; charset=UTF-8 | |
| Jekyll | RSS 2.0 | text/xml | <link rel="alternate" type="application/rss+xml" title="Jekyll • Simple, blog-aware, static sites - Feed" href="/feed.xml" /> |
| Octopress | Atom 1.0 | text/xml | <link href="/atom.xml" rel="alternate" title="Octopress" type="application/atom+xml"> |
| Jeffrey Zeldman | RSS 2.0 | text/html | <link rel="alternate" type="application/rss+xml" title="Jeffrey Zeldman Presents The Daily Report RSS Feed. Designing with web standards." href="/rss/" /> |
| Eric Meyer | RSS 2.0 | text/html | <link rel="alternate" type="application/rss+xml" title="Thoughts From Eric" href="/eric/thoughts/rss2/full" /> |
| Daring Fireball / John Gruber | Atom 1.0 | application/atom+xml | <link rel="alternate" type="application/atom+xml" href="/index.xml" /> |
| A List Apart | RSS 2.0 | text/xml; charset=UTF-8 | <link rel="alternate" type="application/rss+xml" title="A List Apart: The Full Feed" href="/site/rss" /> |
| 24 Ways | RSS 2.0 | text/xml; charset=UTF-8 | <link rel="alternate" type="application/rss+xml" title="rss" href="http://feeds.feedburner.com/24ways" /> |
| The W3 Consortium | RSS 2.0 | text/html | <link rel="alternate" type="application/atom+xml" title="W3C News" href="/News/atom.xml" /> |

RSS 2.0 is the clear favorite. Still, several important feeds use Atom 1.0, notably the Feedburner status feed and Daring Fireball. This leads me to conclude that either format is perfectly acceptable in today’s web.
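For anyone who wants the numbers behind that, here is a small tally sketch. The rows are transcribed from the survey table above (format and Content-Type header only); the names SURVEY and tally are mine, and charset parameters are stripped before counting.

```python
from collections import Counter

# (feed, format, Content-Type header) transcribed from the survey table.
SURVEY = [
    ("Blogger (Atom)", "Atom 1.0", "application/atom+xml; charset=UTF-8"),
    ("Blogger (RSS)", "RSS 2.0", "application/rss+xml; charset=UTF-8"),
    ("Tumblr", "RSS 2.0", "text/xml; charset=utf-8"),
    ("Wordpress.com", "RSS 2.0", "text/xml; charset=UTF-8"),
    ("Feedburner Status", "Atom 1.0", "text/xml; charset=UTF-8"),
    ("Jekyll", "RSS 2.0", "text/xml"),
    ("Octopress", "Atom 1.0", "text/xml"),
    ("Jeffrey Zeldman", "RSS 2.0", "text/html"),
    ("Eric Meyer", "RSS 2.0", "text/html"),
    ("Daring Fireball / John Gruber", "Atom 1.0", "application/atom+xml"),
    ("A List Apart", "RSS 2.0", "text/xml; charset=UTF-8"),
    ("24 Ways", "RSS 2.0", "text/xml; charset=UTF-8"),
    ("The W3 Consortium", "RSS 2.0", "text/html"),
]


def tally(rows):
    """Count feeds per format and per (format, media type) pair."""
    formats = Counter(fmt for _, fmt, _ in rows)
    types = Counter(
        (fmt, header.split(";", 1)[0].strip().lower()) for _, fmt, header in rows
    )
    return formats, types


formats, types = tally(SURVEY)
for (fmt, media), count in sorted(types.items()):
    print(f"{fmt:9} {media:22} {count}")
```

By this count, 9 of the 13 feeds are RSS 2.0 and 4 are Atom 1.0.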

Content types

There’s little consensus here. For Atom feeds, text/xml makes an appearance, but several feeds use the most correct application/atom+xml.

In the RSS feeds, most stick with the safe bet of text/xml. I was surprised to discover that text/html is served by web evangelists Jeffrey Zeldman and Eric Meyer, and even more so that it is served by the official W3C news feed. Unless I am mistaken, this content type is just flat-out wrong and should not be used. I wonder if there is a rationale behind their decisions.

Autodiscovery

Every feed surveyed supports autodiscovery. Notably, none of the feeds except Blogger offered a choice of both RSS and Atom in the autodiscovery tags. This is good UI: presenting users with a choice between two functionally interchangeable formats is unhelpful at best and badly confusing at worst. Given the widespread compatibility of both RSS and Atom, you should pick one and serve that by default.

This admonishment is directed at me as well. Until performing this survey, I had been offering both formats through autodiscovery (in the name of user choice). Upon realizing that I was in a serious minority, I reevaluated and realized that I had made a poor decision. From now on I will be pointing to only one format. I will probably continue to serve the other feed format, but will not be advertising it.

In summary

Does your head hurt? Let’s distill these discoveries down to a small set of best practices. Here’s a mildly opinionated guide to serving a successful content feed:

- Use Atom 1.0.

- Generate a file named feed.xml.

- Serve it with the content type application/atom+xml.

- Put this in the <head> element of your site, changing the title to something descriptive:

<link rel="alternate" type="application/atom+xml"
  href="/feed.xml" title="My Blog Feed" />

- Try to forget all that you have witnessed here today.
